Embodied AI Explained: How Intelligent Systems Interact with the Real World

As automation expands into increasingly complex environments, intelligence is no longer measured only by accuracy or speed, but by how well systems cope with uncertainty and real-world consequences. The ability to perceive, adapt, and act in the physical world marks a major shift in artificial intelligence. Instead of existing only in code and data centers, AI is now stepping into the real world through a new paradigm known as Embodied AI.

What is Embodied AI?

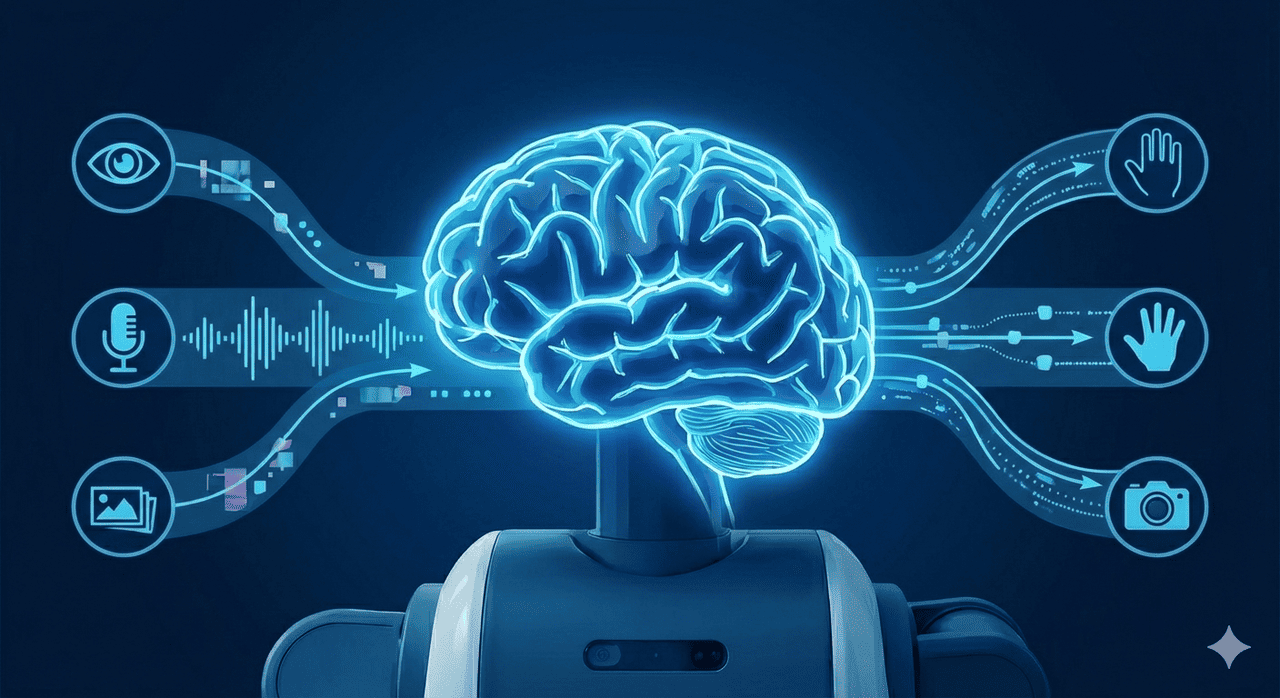

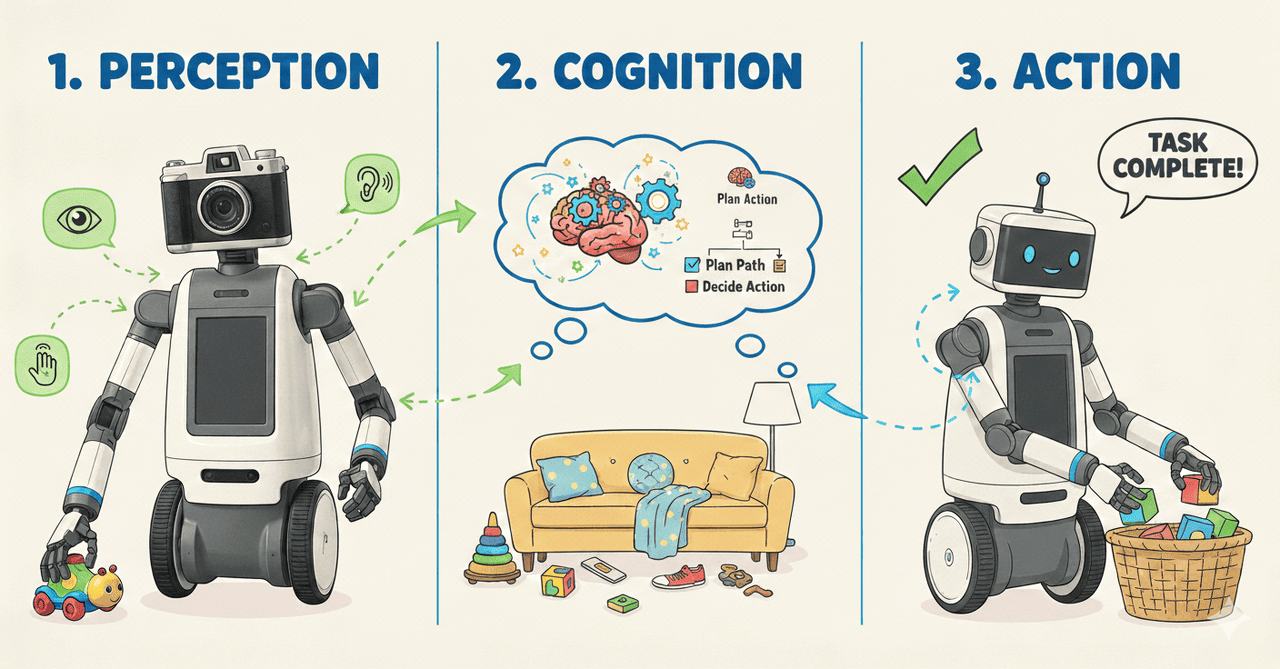

Unlike traditional software-based AI, Embodied AI integrates advanced AI models and sensors into physical entities so that they can make sense of the real world. Sensory feedback lets these systems observe, recognise, and detect things in their physical environment. Embodied AI also draws on machine learning and computer vision, extending AI beyond processing and analysing data into the physical world.

How Different Is This From Robots We Already Have?

While robots have existed for decades, most traditional robots operate using fixed rules and predefined workflows, performing well only in structured and predictable environments. In contrast, rather than relying solely on rigid instructions, Embodied AI learns from data, adapts to unstructured and dynamic environments, and generalizes to new tasks without explicit reprogramming. This allows embodied AI to make context-aware decisions, collaborate more naturally with humans, and handle real-world complexity with greater flexibility and autonomy.

How is Embodied AI built?

1. Pre-training

Pre-training involves feeding large datasets to AI models to equip them with general knowledge. Web data provides broad coverage of human behavior and common-sense knowledge, attuning models to the wide variety of scenarios they may encounter in real life. Real-world robotic data captures physical complexity and the uncertainty of reality. Synthetic data generated from simulations and digital twins further supplements training by allowing controlled variation of environments and conditions. When enhanced with world models that simulate physics and spatial relationships, synthetic data improves realism, reduces hallucinations, and keeps model learning grounded in verifiable real-world contexts.
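The "controlled variation of environments and conditions" mentioned above is often called domain randomization. The following is a minimal sketch of the idea, not a real simulator interface: the `SceneConfig` fields, their value ranges, and the `sample_scenes` helper are all hypothetical names chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneConfig:
    """Hypothetical parameters a simulator might expose for one synthetic scene."""
    light_intensity: float  # relative brightness, 1.0 = nominal
    floor_friction: float   # coefficient of friction of the floor
    obstacle_count: int     # number of obstacles placed in the scene


def sample_scenes(n, seed=0):
    """Draw n randomized scene configurations (ranges are illustrative assumptions)."""
    rng = random.Random(seed)  # seeded so the synthetic dataset is reproducible
    return [
        SceneConfig(
            light_intensity=rng.uniform(0.3, 1.5),
            floor_friction=rng.uniform(0.2, 1.0),
            obstacle_count=rng.randint(0, 8),
        )
        for _ in range(n)
    ]
```

Each configuration would then be handed to a simulator or digital twin to render one training scene, so the model sees many lighting, surface, and clutter conditions instead of a single fixed setup.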

2. Post-training

Post-training focuses on refining model performance using simulation-based techniques. Synthetic environments enable testing across a wide range of scenarios, including rare edge cases, improving robustness and reliability. Reinforcement learning allows embodied AI systems to optimize behavior through trial and error, while imitation learning enables faster skill acquisition by learning from human demonstrations. Together, these methods help models adapt safely and efficiently before deployment in real-world settings.
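To make "trial and error" concrete, here is a small sketch of tabular Q-learning, one classic reinforcement learning method. The five-cell corridor environment, the reward of 1 at the goal, and the hyperparameters are assumptions made for illustration; real embodied systems train far larger policies in rich simulators.

```python
import random

N_STATES = 5        # toy corridor with cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)  # action 0 moves left, action 1 moves right


def step(state, action_index):
    """Move within the corridor; reaching the goal cell pays reward 1 and ends the episode."""
    next_state = min(max(state + ACTIONS[action_index], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values by acting, observing the reward, and updating the estimate."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(200):  # cap on episode length
            # Explore with probability epsilon, or when both actions look equally good.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, action)
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q):
    """Best action index for every non-goal cell."""
    return [0 if left > right else 1 for left, right in q[:-1]]
```

After training, `greedy_policy(train())` picks "move right" in every non-goal cell, which the agent discovered purely from the rewards it received. Imitation learning would instead fit the policy directly to recorded human demonstrations.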

3. Inference

During inference, trained models operate in real time to perceive their environment and make decisions. Computer vision enables accurate scene understanding and navigation, while large language models support natural language understanding and human–machine interaction. Vision-language models integrate visual, linguistic, and sensor inputs to provide deeper contextual awareness, and their extension into action planning allows embodied AI systems to perform complex tasks and respond intelligently to dynamic environments.
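At runtime, the flow described above reduces to a repeated perceive, decide, act cycle. The sketch below is a heavily simplified illustration: `Observation`, `decide`, and `run_loop` are hypothetical names, the obstacle distance stands in for the output of a vision model, and the keyword check stands in for a language model parsing the instruction.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    obstacle_distance_m: float  # stand-in for what a vision model would report
    instruction: str            # stand-in for a spoken or typed human command


def decide(obs, safe_distance_m=0.5):
    """Combine perception and instruction into one action (toy rule, not a real planner)."""
    if obs.obstacle_distance_m < safe_distance_m:
        return "stop"  # safety takes priority over the instruction
    if "forward" in obs.instruction.lower():
        return "forward"
    return "wait"


def run_loop(distances, instruction):
    """One decision per incoming sensor reading, as in a real-time control loop."""
    return [decide(Observation(d, instruction)) for d in distances]
```

For example, `run_loop([2.0, 1.0, 0.3], "Move forward to the shelf")` yields two "forward" actions followed by "stop" once the obstacle comes within the assumed 0.5 m safety distance.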

What are its advantages?

1. Real-world Adaptability of AI

Embodied AI can learn by interacting with the real world rather than relying only on pre-loaded data. Because it can sense its environment, it is able to adapt its behavior beyond its initial programming. For example, if a delivery robot misjudges the distance to a step and bumps into it, its vision sensors detect the unexpected obstacle. Over time, the system learns to better estimate depth and adjust its path, allowing it to navigate similar environments more safely in future.
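The delivery-robot example can be sketched as a simple online correction: each time the robot learns the true distance (for instance from a contact sensor), it nudges a learned offset so that future depth estimates are closer to reality. The `DepthCorrector` class, its learning rate, and the constant 0.2 m sensor error in the usage note are assumptions for illustration only.

```python
class DepthCorrector:
    """Hypothetical online correction for a camera that misjudges distance."""

    def __init__(self, learning_rate=0.3):
        self.learning_rate = learning_rate
        self.bias_m = 0.0  # learned offset added to every raw estimate

    def corrected(self, raw_estimate_m):
        return raw_estimate_m + self.bias_m

    def update(self, raw_estimate_m, true_distance_m):
        """Shift the offset a fraction of the way toward the observed error."""
        error = true_distance_m - self.corrected(raw_estimate_m)
        self.bias_m += self.learning_rate * error
```

If the camera consistently reports a step as 0.2 m farther away than it is, repeated calls to `update` drive `bias_m` toward -0.2, so later corrected estimates land close to the true distance.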

2. Improved Task Efficiency & Performance

Embodied AI is reshaping operations across multiple industries by automating physically demanding and repetitive tasks, improving service quality, and supporting maintenance activities. Examples of real-world applications include autonomous mobile robots in logistics and robotic arms in manufacturing. By directly interacting with the physical world, these systems can provide consistent labor support while improving speed, accuracy, and precision. Their ability to adapt to real-time conditions also allows workflows to be optimized dynamically, leading to higher productivity and reduced operational costs.

3. Safety & Reliability

Embodied AI systems can significantly improve safety in environments where errors carry high risks. By leveraging techniques such as Model Predictive Control (MPC), these systems are able to anticipate future states and respond proactively to complex, non-linear, and rapidly changing conditions. This predictive capability is especially critical in applications like autonomous driving and robotic surgery, where real-time adaptation and reliable decision-making are essential to minimise accidents and ensure safe operation.
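The core loop of MPC is: predict several steps ahead with a model, pick the action sequence with the lowest cost, apply only its first action, then re-plan. The sketch below illustrates that loop with a brute-force search over a few candidate accelerations for a one-dimensional vehicle approaching an obstacle. The dynamics, cost, and constants are all assumptions for illustration; production MPC typically uses numerical optimization solvers rather than enumeration.

```python
from itertools import product

DT = 0.5                   # seconds per control step (assumed)
ACCELS = (-4.0, 0.0, 2.0)  # candidate accelerations in m/s^2 (assumed)
V_MAX = 4.0                # speed cap in m/s (assumed)
HORIZON = 5                # number of look-ahead steps


def simulate(x, v, a):
    """Toy vehicle model: clamp speed to [0, V_MAX], then advance the position."""
    v = min(V_MAX, max(0.0, v + a * DT))
    return x + v * DT, v


def plan_cost(x, v, accels, obstacle_x):
    """Heavily penalize reaching the obstacle; otherwise reward getting closer to it."""
    cost = 0.0
    for a in accels:
        x, v = simulate(x, v, a)
        if x >= obstacle_x:
            cost += 1e6
        cost += obstacle_x - x
    return cost


def mpc_action(x, v, obstacle_x):
    """Search every acceleration sequence over the horizon and return its first action."""
    best = min(
        product(ACCELS, repeat=HORIZON),
        key=lambda seq: plan_cost(x, v, seq, obstacle_x),
    )
    return best[0]


def drive(obstacle_x=10.0, steps=40):
    """Receding-horizon loop: re-plan at every step and apply only the first action."""
    x, v, trace = 0.0, 0.0, []
    for _ in range(steps):
        x, v = simulate(x, v, mpc_action(x, v, obstacle_x))
        trace.append((x, v))
    return trace
```

In this toy setup, the vehicle accelerates while the road ahead is clear, starts braking as soon as its look-ahead predicts it would otherwise reach the obstacle, and comes to rest just short of it.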

How HPC-AI Can Support the Embodied AI Field

HPC-AI plays a critical role in advancing Embodied AI by providing the large-scale computing power required to train, simulate, and deploy intelligent physical systems. Embodied AI relies heavily on multimodal models that combine vision, language, and sensor data, as well as extensive simulation and reinforcement learning to handle complex real-world environments. High-performance GPUs and accelerated computing platforms enable faster model training, large-scale synthetic data generation, and high-fidelity digital twin simulations. In addition, HPC-AI infrastructure supports low-latency and reliable inference at runtime, which is essential for safety-critical applications such as autonomous vehicles, robotics, and smart manufacturing. By reducing training time, improving scalability, and enabling real-time decision-making, HPC-AI helps embodied AI systems become more capable, robust, and deployable at scale.