New Model Alert: GLM-5.1 is Now Available on HPC-AI Model APIs

We are excited to announce that GLM-5.1, the latest flagship model from Zhipu AI, is now available on the HPC-AI Model APIs platform. GLM-5.1 moves beyond simple conversational chatbots toward robust Agentic Engineering: autonomous, continuous execution of complex tasks.

As an AI infrastructure provider, we are thrilled to bring this "Long-Horizon" reasoning power to our developers with competitive, transparent pricing.

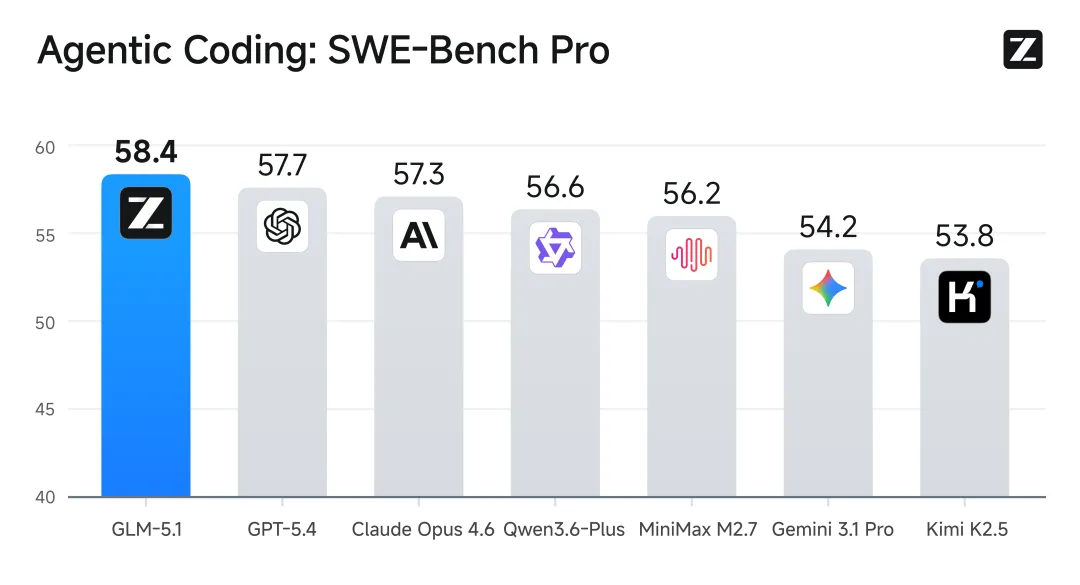

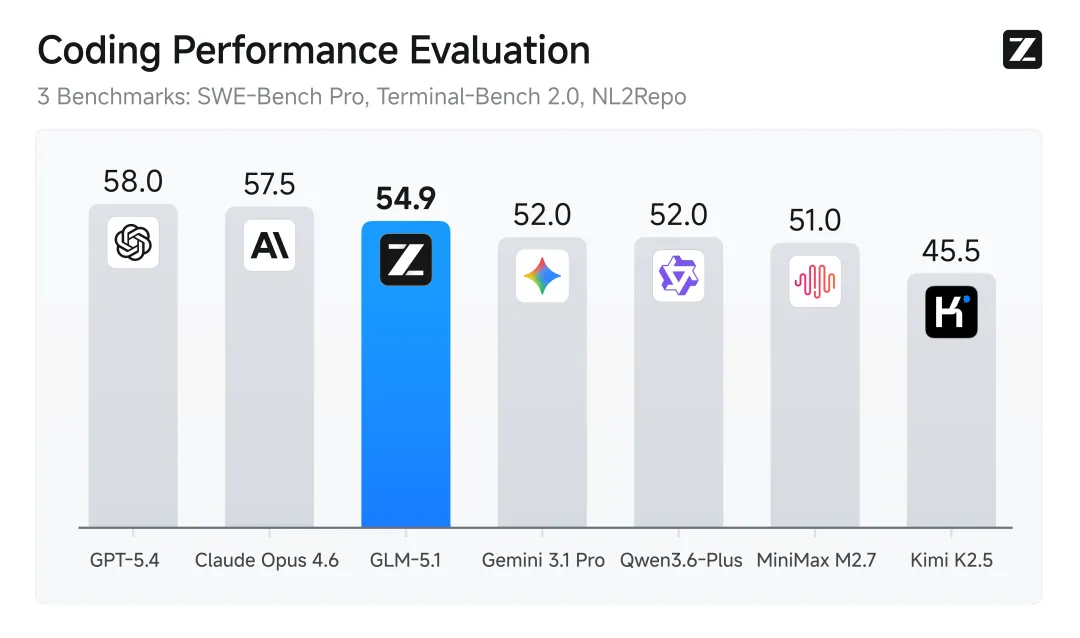

GLM-5.1: Leading Global Benchmarks in Intelligence

While traditional models excel at answering short queries, they often struggle with multi-step engineering tasks. GLM-5.1 is designed to break this ceiling:

- Autonomous Engineering: It can maintain productivity for up to 8 hours on a single complex goal, handling hundreds of reasoning steps and thousands of tool calls independently.

- SOTA Performance: GLM-5.1 rivals top-tier global models like Claude 3.5 Sonnet, achieving industry-leading scores in SWE-bench Pro and Terminal-Bench 2.0.

- Efficiency at Scale: It uses a 744B-parameter Mixture-of-Experts (MoE) architecture that delivers flagship intelligence with optimized inference speed.

Transparent & Competitive Pricing

At HPC-AI Model APIs, we believe cutting-edge power should be accessible. We are launching GLM-5.1 with a pricing structure optimized for high-volume agentic workflows, especially those utilizing context caching to reduce costs.
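To make the effect of context caching on cost concrete, here is a minimal sketch of a per-request cost estimate. It assumes the common per-million-token billing scheme with a discounted rate for cached input tokens. The `Pricing` class, the `estimate_cost` helper, and every price in it are hypothetical placeholders for illustration, not the platform's actual rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-million-token prices in USD. All values used below are placeholders."""
    input_per_m: float
    cached_input_per_m: float
    output_per_m: float


def estimate_cost(pricing, input_tokens, cached_tokens, output_tokens):
    """Return the estimated USD cost of one request.

    cached_tokens is the portion of input_tokens served from the context cache.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_tokens
    total = (
        uncached * pricing.input_per_m
        + cached_tokens * pricing.cached_input_per_m
        + output_tokens * pricing.output_per_m
    )
    return total / 1_000_000


# Placeholder prices, NOT the platform's actual rates.
example = Pricing(input_per_m=1.00, cached_input_per_m=0.25, output_per_m=4.00)

# One agent step that resends a 100k-token context, with and without a 90k cache hit.
without_cache = estimate_cost(example, 100_000, 0, 2_000)
with_cache = estimate_cost(example, 100_000, 90_000, 2_000)
print(f"no cache: ${without_cache:.4f}, with cache: ${with_cache:.4f}")
```

Agentic workloads tend to resend a large, mostly unchanged context on every step, so the cached share of input tokens often dominates the bill. Substitute the published rates to get real figures.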

A Remarkable Milestone: From Zero to a Linux Desktop in 8 Hours

In a powerful demonstration of its agentic capabilities, GLM-5.1 successfully constructed a complete Linux desktop environment from scratch in just 8 hours, showcasing an unprecedented level of autonomous planning and execution.

GLM-5.1 represents a major step toward truly autonomous AI engineering, redefining what models can achieve in a single workday.

By accessing GLM-5.1 via our Model APIs, you benefit from:

- Low Latency: High-performance inference infrastructure.

- Reliability: Enterprise-grade uptime for long-running agentic tasks.

- Cost Efficiency: One of the most aggressive price-to-performance ratios in the market today.

Get Started Today

GLM-5.1 is now available for all users via our Model APIs dashboard. Whether you are building autonomous coding agents, complex data analysis pipelines, or multi-modal UI assistants, GLM-5.1 provides the cognitive backbone you need at a fraction of the cost.
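As a rough illustration of what a first call might look like, the sketch below builds (but does not send) a chat request. It assumes the platform exposes an OpenAI-style chat completions endpoint with bearer-token authentication, which this announcement does not confirm. `BASE_URL`, `MODEL_ID`, and `build_chat_request` are hypothetical placeholders. Check the dashboard for the real endpoint and model identifier.

```python
import json
import urllib.request

# Placeholders: the announcement gives neither an endpoint nor a model identifier.
BASE_URL = "https://api.example.invalid/v1"  # hypothetical
MODEL_ID = "glm-5.1"                         # hypothetical


def build_chat_request(api_key, prompt, base_url=BASE_URL, model=MODEL_ID):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("YOUR_API_KEY", "Summarize the failing tests in this repo.")
print(req.get_method(), req.full_url)
```

Once the real endpoint is known, the request could be sent with `urllib.request.urlopen(req)` and the JSON response parsed with `json.load`.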