The Shift from Context to Coordination: Navigating Kimi 2.6, 2.5, and GLM-5.1

In the rapidly evolving landscape of foundation models, the metric for success is shifting. We have moved past the era when model quality was judged solely by benchmark scores or by the sheer number of tokens a model could ingest. Today, the frontier of AI development is increasingly defined by "agentic readiness": a model's ability not just to process information, but to execute logic and coordinate complex workflows. This evolution is captured well by the transition from Kimi 2.5 to Kimi 2.6, and by the more specialized trajectory of GLM-5.1.

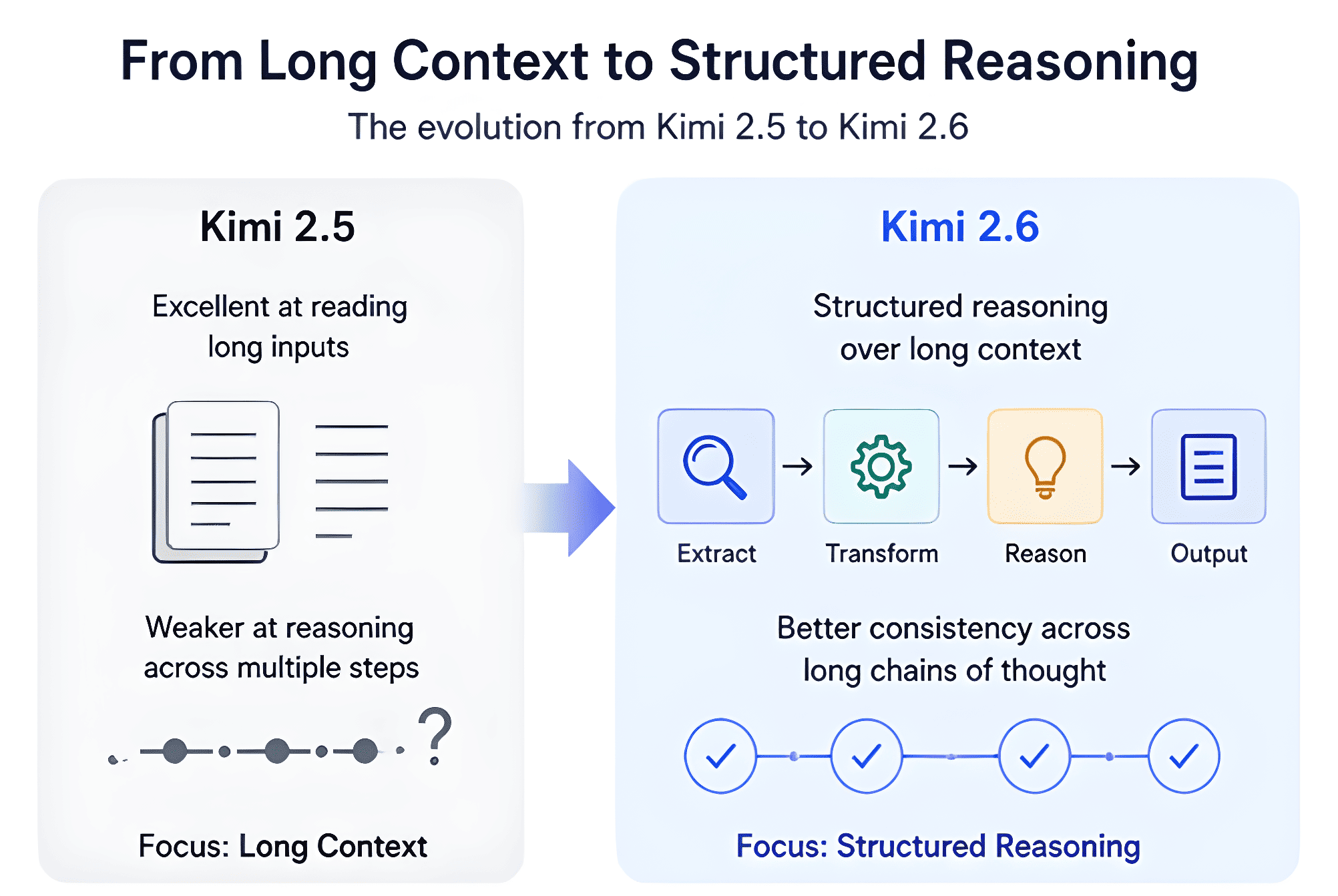

The Evolution of the Kimi Lineage: From Reading to Reasoning

To understand where we are going, we must look at where we started. Kimi 2.5 established itself as a heavyweight of the long-context era. It focused on making ultra-long document processing broadly accessible, offering a stable and cost-efficient option for retrieval-heavy tasks. For developers building standard RAG (Retrieval-Augmented Generation) pipelines, Kimi 2.5 remains a reliable workhorse, balancing latency against the ability to stay coherent across context windows of 100k+ tokens.

Kimi 2.6, however, represents a fundamental architectural pivot rather than an incremental update. Where the previous version excelled at reading long inputs, Kimi 2.6 is designed for structured reasoning over that context. The leap is most visible in "extract-transform-reason-output" pipelines: instead of requiring developers to build complex external orchestration layers to manage multi-step logic, Kimi 2.6 internalizes this process. It also brings significantly improved Chain-of-Thought (CoT) stability, which helps keep logical consistency from drifting as reasoning chains grow longer.
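To make the four stages concrete, here is a minimal Python sketch of the kind of hand-built orchestration layer the paragraph above describes: each stage is an ordinary function, and only the reasoning stage calls a model. The `ModelFn` type, the stage functions, and the stub model are hypothetical illustrations, not a Kimi API; a real pipeline would replace the stub with an actual client call.

```python
import json
from typing import Callable

# Hypothetical signature for any text-in/text-out model call; not a real Kimi API.
ModelFn = Callable[[str], str]


def extract(document: str) -> list[str]:
    """Extract: keep only 'key: value' lines from the raw text."""
    return [line.strip() for line in document.splitlines() if ":" in line]


def transform(facts: list[str]) -> dict[str, float]:
    """Transform: turn 'key: value' lines into a typed record, skipping non-numeric values."""
    record: dict[str, float] = {}
    for fact in facts:
        key, _, value = fact.partition(":")
        try:
            record[key.strip().lower()] = float(value)
        except ValueError:
            continue  # drop facts we cannot type; a real pipeline might log them
    return record


def reason(record: dict[str, float], model: ModelFn) -> str:
    """Reason: hand the model the clean record, not the raw document."""
    prompt = (
        "Using only these figures, answer yes or no: is revenue above cost?\n"
        + json.dumps(record)
    )
    return model(prompt).strip().lower()


def output(record: dict[str, float], answer: str) -> str:
    """Output: emit a machine-readable result for downstream code."""
    return json.dumps({"inputs": record, "answer": answer}, sort_keys=True)


def run_pipeline(document: str, model: ModelFn) -> str:
    record = transform(extract(document))
    return output(record, reason(record, model))


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call, used only for illustration."""
    return "Yes"


if __name__ == "__main__":
    doc = "Quarterly summary\nRevenue: 120\nCost: 90\nNotes: flat quarter"
    print(run_pipeline(doc, stub_model))
```

The design point is that `extract` and `transform` hand the model a small, typed record rather than the raw document; this is the kind of plumbing that a model with stronger built-in structured reasoning is claimed to make less necessary.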

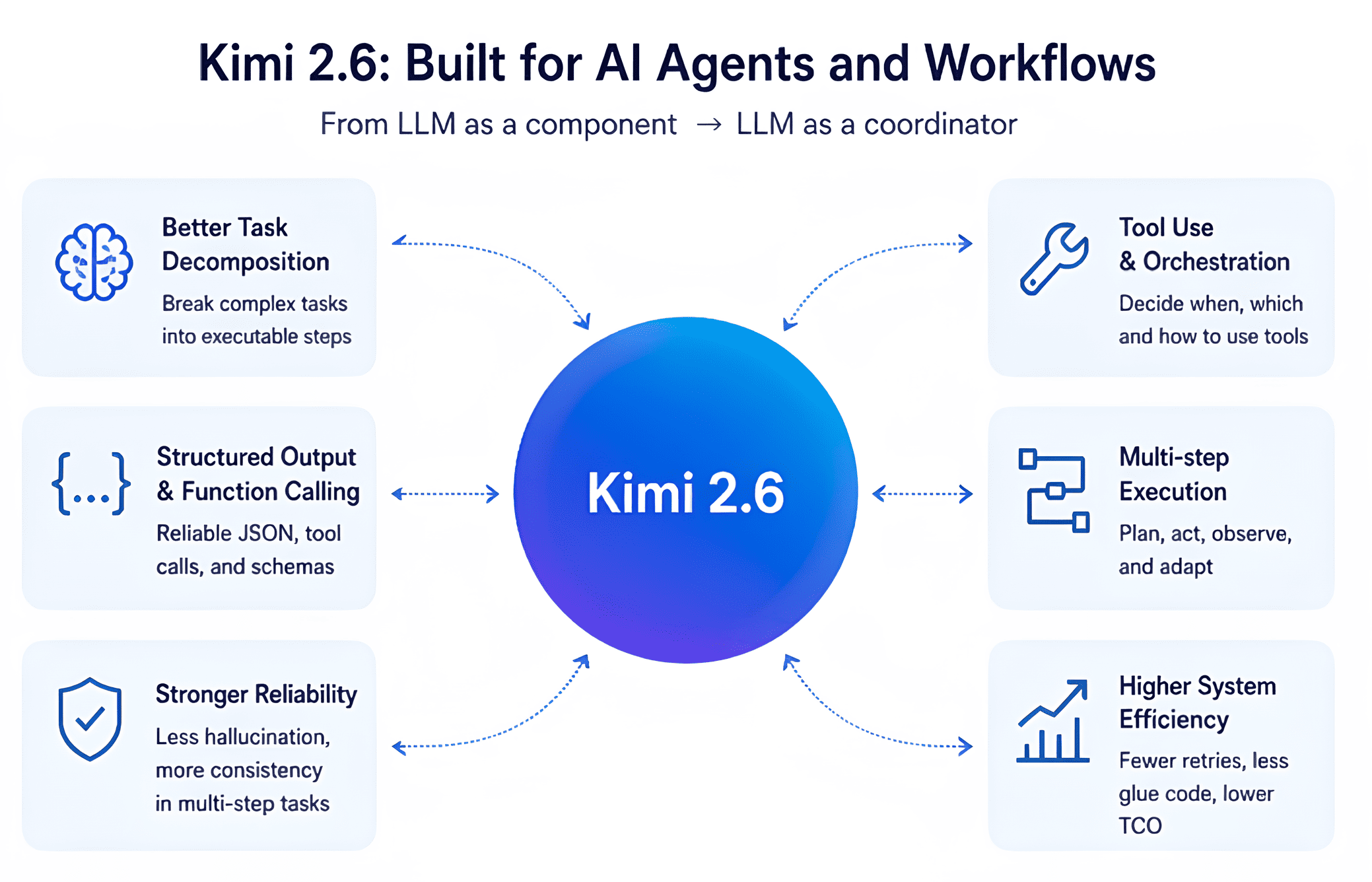

Agentic Readiness and Structured Outputs

For AI builders, the most practical upgrade in Kimi 2.6 is its native support for structured outputs and tool invocation. In version 2.5, getting reliable JSON or precise function calls often required extensive prompt engineering and "retry" loops. Kimi 2.6 minimizes this "glue code" by offering a more deterministic output format. This reliability transforms the LLM from a simple component in a software stack into a central coordinator. It is no longer just generating text; it is deciding when to call a tool, sequencing those actions, and accurately interpreting the resulting data to inform the next step.
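For comparison, here is a minimal Python sketch of the retry-and-validate glue code described above: the model is asked for a JSON tool call, the reply is parsed and checked against a tool registry, validation errors are fed back as extra prompt text, and a valid call is dispatched. The JSON shape `{"tool": ..., "args": {...}}`, the `TOOLS` registry, and the toy `add`/`upper` tools are assumptions for illustration; real providers define their own tool-calling formats.

```python
import json
from typing import Any, Callable

# Hypothetical tool registry: name -> (required argument names, implementation).
TOOLS: dict[str, tuple[set[str], Callable[..., Any]]] = {
    "add": ({"a", "b"}, lambda a, b: a + b),
    "upper": ({"text"}, lambda text: text.upper()),
}


class ToolCallError(ValueError):
    """Raised when model output is not a valid call to a registered tool."""


def parse_tool_call(raw: str) -> tuple[str, dict[str, Any]]:
    """Parse and validate a reply of the assumed form {"tool": ..., "args": {...}}."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ToolCallError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ToolCallError("reply must be a JSON object")
    name, args = call.get("tool"), call.get("args")
    if not isinstance(name, str) or name not in TOOLS:
        raise ToolCallError(f"unknown tool: {name!r}")
    required = TOOLS[name][0]
    if not isinstance(args, dict) or set(args) != required:
        raise ToolCallError(f"{name!r} expects exactly the arguments {sorted(required)}")
    return name, args


def call_with_retries(model: Callable[[str], str], prompt: str, max_attempts: int = 3) -> Any:
    """Request a tool call, feed validation errors back to the model, then run the tool."""
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        raw = model(prompt + feedback)
        try:
            name, args = parse_tool_call(raw)
        except ToolCallError as err:
            # Tell the model what was wrong so the next attempt can self-correct.
            feedback = f"\nAttempt {attempt} was rejected ({err}). Reply with JSON only."
            continue
        return TOOLS[name][1](**args)
    raise RuntimeError(f"no valid tool call after {max_attempts} attempts")


if __name__ == "__main__":
    # Stub model: chatty on the first try, valid JSON on the second.
    replies = iter(["Sure, I'll add those!", '{"tool": "add", "args": {"a": 2, "b": 3}}'])
    print(call_with_retries(lambda prompt: next(replies), "Add 2 and 3."))
```

With a model whose structured output is reliable, `call_with_retries` should almost always return on the first attempt, so the loop becomes a safety net rather than a core part of the design.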

GLM-5.1: The Push Toward Autonomy

While Kimi 2.6 focuses on making reasoning more controllable and structured for developers, GLM-5.1 leans into the philosophy of autonomous, long-horizon planning. If Kimi 2.6 is the ideal engine for a builder-controlled SaaS feature, GLM-5.1 is designed for systems-level AI. It prioritizes continuous execution and iterative planning, making it a formidable choice for enterprise workflows that require the model to operate with a degree of self-driven agency.
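Iterative planning of this kind is often built as a plan-act-observe loop. The Python sketch below is a generic version of that pattern, not a description of GLM-5.1's internals: a hypothetical planner proposes the remaining steps given the history so far, the agent executes only the next step, records the observation, and re-plans, stopping when the planner returns no steps or a step budget runs out. The `Planner` and `Executor` signatures and the `toy_plan`/`toy_execute` functions are assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical planner: (goal, history) -> remaining steps; an empty list means "done".
Planner = Callable[[str, list[str]], list[str]]
# Hypothetical executor: runs one step and returns an observation.
Executor = Callable[[str], str]


@dataclass
class RunResult:
    history: list[str] = field(default_factory=list)
    finished: bool = False


def run_agent(goal: str, plan: Planner, execute: Executor, max_steps: int = 10) -> RunResult:
    """Plan, execute one step, observe, and re-plan until done or out of budget."""
    result = RunResult()
    while True:
        steps = plan(goal, result.history)
        if not steps:
            result.finished = True  # planner judges the goal complete
            break
        if len(result.history) >= max_steps:
            break  # budget exhausted: stop instead of running indefinitely
        step = steps[0]  # commit only to the next step; later steps may change
        result.history.append(f"{step} -> {execute(step)}")
    return result


def toy_plan(goal: str, history: list[str]) -> list[str]:
    """Stop once the last observation reports 'done'; otherwise propose next steps."""
    if history and history[-1].endswith("done"):
        return []
    n = len(history) + 1
    return [f"step {n}", f"step {n + 1}"]


def toy_execute(step: str) -> str:
    """Pretend the goal is reached on the third step."""
    return "done" if step == "step 3" else "continue"


if __name__ == "__main__":
    run = run_agent("finish three steps", toy_plan, toy_execute)
    print(run.finished, run.history)
```

Re-planning after every observation is what separates this loop from executing a fixed plan; the `max_steps` budget is a simple guard so that a planner that never declares completion cannot run indefinitely.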

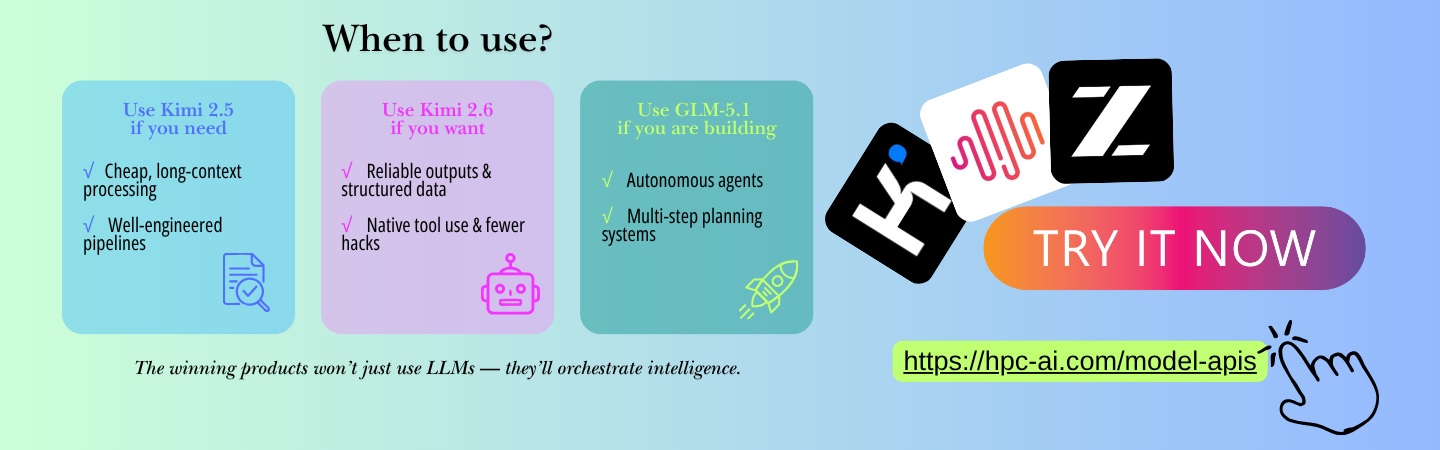

Strategic Implementation: Choosing Your Engine

The decision of which model to integrate depends entirely on the architectural needs of your application. Kimi 2.5 remains the gold standard for high-volume, cost-sensitive tasks such as summarization and basic retrieval, where the pipeline is already well engineered.

Kimi 2.6, by contrast, should be the default choice for startups building AI agents or workflow automation tools. The slight increase in compute per request can be more than offset by lower system-wide costs: fewer hallucinations and more reliable structured outputs mean less spending on validation and retry infrastructure.
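The cost argument can be made concrete with a simple expected-value model. Assume each request costs c, each attempt independently yields a valid structured output with probability p, and failed attempts are retried until one succeeds. The number of attempts is then geometrically distributed, so the expected cost per valid result is c/p. The values of c and p below are hypothetical, chosen only to illustrate the trade-off, not measured for any of these models.

```latex
\begin{aligned}
\mathbb{E}[\text{attempts}] &= \sum_{k=1}^{\infty} k\,(1-p)^{k-1}\,p = \frac{1}{p} \\
\mathbb{E}[\text{cost per valid result}] &= \frac{c}{p} \\
\text{Model A (hypothetical): } c = 1.00,\ p = 0.80 &\;\Rightarrow\; \frac{1.00}{0.80} = 1.25 \\
\text{Model B (hypothetical): } c = 1.10,\ p = 0.98 &\;\Rightarrow\; \frac{1.10}{0.98} \approx 1.12
\end{aligned}
```

Under these assumed numbers, a 10% higher per-request cost still yields roughly 10% lower expected cost per valid result, before counting the engineering effort saved on validation and retry code. This estimate ignores validation overhead and correlated failures, so treat it as a rough guide.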

For those operating on the bleeding edge of autonomous systems—where the model must navigate open-ended tasks with minimal human intervention—GLM-5.1 offers the necessary long-horizon reasoning capabilities.

Final Thoughts for AI Builders

The release of these models signals a clear message: the complexity of AI engineering is moving from the application layer into the model itself. We are entering an era where "intelligence" is measured by how well a model operates inside a system rather than how well it chats.

Kimi 2.5 scaled context; Kimi 2.6 structures that intelligence; GLM-5.1 seeks to make it autonomous. For the modern AI builder, the goal is no longer just to use an LLM, but to orchestrate an intelligent system that is reliable, deterministic, and agent-ready.

How will these improvements in structured reasoning change the way you design your next multi-step AI workflow? Test them now.